Main researcher: Marcin Morański, Ph.D.

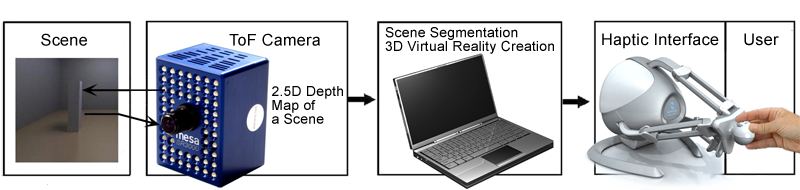

The haptic presentation system was built to let blind people explore by touch real 3D objects reconstructed in virtual reality. The prototype consists of an SR3000 camera (Mesa Imaging AG), a laptop and a Novint Falcon haptic interface (Fig. 1). The camera measures the distance to obstacles in a scene from the time of flight of the light it emits and receives back after reflection, and outputs a 2.5D depth map. The camera is connected to the remote computer. On the laptop, the depth map is segmented to extract all obstacles from the acquired scene. This step yields the information (i.e. the location and size of objects) from which the virtual scene is created (Fig. 2). The Novint Falcon haptic game controller is then used for tactile exploration of the virtual scene. Using their sense of touch, blind users access information about the content of the observed scene. The usability of the system was evaluated with eight blind participants. The experiments showed that the system can be used by blind people.
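The segmentation step can be illustrated with a minimal sketch. The description above does not say which segmentation algorithm the prototype uses, so the example assumes a simple region-growing (flood-fill) approach: neighbouring pixels whose distances differ by less than a tolerance are grouped into one obstacle, and each obstacle is summarised by its bounding box, pixel count and mean distance. The function name `segment_obstacles`, its parameters and the toy depth map are all hypothetical and chosen only for illustration.

```python
from collections import deque


def segment_obstacles(depth, max_range=3.0, depth_tol=0.15, min_pixels=4):
    """Group neighbouring pixels of similar depth into obstacles.

    depth      -- 2D list of distances in metres (rows of equal length)
    max_range  -- pixels farther than this are treated as background (assumed value)
    depth_tol  -- maximum depth difference between neighbours in one obstacle
    min_pixels -- regions smaller than this are discarded as noise
    """
    rows, cols = len(depth), len(depth[0])
    seen = [[False] * cols for _ in range(rows)]
    obstacles = []
    for r0 in range(rows):
        for c0 in range(cols):
            if seen[r0][c0] or depth[r0][c0] > max_range:
                continue
            # Breadth-first flood fill over 4-connected neighbours.
            seen[r0][c0] = True
            queue = deque([(r0, c0)])
            pixels = []
            while queue:
                r, c = queue.popleft()
                pixels.append((r, c))
                for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)):
                    if (0 <= nr < rows and 0 <= nc < cols
                            and not seen[nr][nc]
                            and depth[nr][nc] <= max_range
                            and abs(depth[nr][nc] - depth[r][c]) <= depth_tol):
                        seen[nr][nc] = True
                        queue.append((nr, nc))
            if len(pixels) < min_pixels:
                continue
            rs = [p[0] for p in pixels]
            cs = [p[1] for p in pixels]
            obstacles.append({
                # (top row, left column, bottom row, right column)
                "bbox": (min(rs), min(cs), max(rs), max(cs)),
                "pixels": len(pixels),
                "mean_distance": sum(depth[r][c] for r, c in pixels) / len(pixels),
            })
    return obstacles


# Toy 4x6 depth map: background at 5 m, one object at 1 m, another at 2 m.
demo = [
    [5.0, 5.0, 5.0, 5.0, 5.0, 5.0],
    [5.0, 1.0, 1.0, 5.0, 5.0, 5.0],
    [5.0, 1.0, 1.0, 5.0, 2.0, 2.0],
    [5.0, 5.0, 5.0, 5.0, 2.0, 2.0],
]
for obstacle in segment_obstacles(demo):
    print(obstacle)
```

In a pipeline like the one described, the bounding box and mean distance of each obstacle would be the kind of location and size information passed on to build the virtual scene. A real depth map would also need noise filtering and a conversion from pixel coordinates to metric coordinates, both of which this sketch omits.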

>> Leaflet – The haptic presentation system (in Polish, 5MB)